Persuasion and Social Proof Thresholds

May 14, 2007, 2:42p - Communication

The art of persuasion is a fascinating topic. Persuasion happens on both a small scale (getting your child to eat her broccoli) and a large scale (getting a majority of people to vote in favor of a certain candidate). On its most basic level, persuasion is about instilling a specific opinion or belief in a person. It's about getting someone to "know" something. Opinions and beliefs seem to form non-coercively in 3 simple ways:

- Direct experience

- Compelling principle

- Social proof

If people believe that hiking to the top of Everest is impossible, but you've done it, you'll believe that it is possible - this is knowing through direct experience. If instead no one has ever climbed Everest, but someone tells you that the air is thick enough to breathe at the top, then you might believe that it's still possible - this is knowing through a compelling principle. In this case, no one's ever done it in practice, but there's a theory to support why it can be done in principle. If instead everyone believes that it can be done, perhaps because one person claims to have done it, you may just trust the belief of the group, independent of anecdotes and arguments to the contrary. This is belief through social proof - you believe because others believe.

Note that I'm ignoring belief instillation that is coerced, via threat, bribery, tricks, or other involuntary means. Yes, there is a very fine line between voluntary and involuntary decisions, but let's not worry about that for now.

What I'm going to discuss here is the third method of knowing, social proof. For most people, I posit that most of what they know, what they believe, is not the result of direct experience or even of compelling principle. Most of what they know, they know because someone else, frequently a group of experts or perhaps a trusted friend, tells them it's true. They trust the individuals to tell them the truth, and also trust that the individuals are smart and have done their homework. This method of belief, via social proof, operates in all human endeavors, from news to science to religion. What proportion of yesterday's news did you directly experience? When was the last time you saw a quantum effect? When was the last time God spoke to you?

Now, the specific question I'm trying to answer is this: Given a belief and given a target number of converts, is it theoretically possible for you to meet your target, given social proof as your only tool of persuasion?

In different words: What is the minimum number of people you need to persuade that is sufficient for the majority of people to be persuaded? Here, social proof would provide the momentum that transformed the belief from a snowflake to an avalanche.

This is a bit of a contrived scenario, but I believe that if we can make progress in understanding persuasion in a more controlled environment with fewer variables, we have a chance of better understanding persuasion in the real world.

Depending on your persuasion task, you may need to identify how much effort it will take to persuade the total numbers that you hope to achieve. Of course, you could in principle go out and meet each person individually, but in practice this isn't really feasible. Instead, to maximize your efficiency and the use of scarce resources (your time and money), you want to identify the minimum number of people you need to persuade before a snowball effect occurs and you have enough other people persuading on your behalf.

Of course, the system is dynamical, meaning that this "minimum number" can change over time. So it's not really a tight theory, but it does provide a method of thinking analytically about the problem of persuasion.

Suppose you want to convince voters about a pending law. Your goal is to get the majority of people to agree with you, so that your law will pass in the next vote. How do you measure, beforehand, whether your effort will be met with success? One strategy is to just focus, work hard, and devote yourself and all of your resources to persuading others. However, such a strategy may well fail - you don't know for sure, one way or the other. What would be useful would be to have a better sense of whether you will fail or not, before you commit your life to your endeavor. This isn't true of all endeavors - for some, the meaning is in the process, not in the outcome. But for many, what matters most is that the outcome is achieved. In those circumstances, it would make sense to have an idea of how likely your persuasion is to succeed, given social proof as your primary means of persuasion.

How do you actually measure your probability of success? The method I propose here is what I call "Social Proof Threshold Theory". This is an idea I've been thinking about for a while, and it is still only roughly formed.

Different individuals have different thresholds before social proof will change their minds. Social proof depends on both the quantity and the quality of the individuals who believe, and also on the specific belief at hand. For example, if a stranger on the street tells me his name is "John", I will most likely believe him. There's only one person telling me this, and the quality of that person is low (he's a stranger) - however, he is an expert, an authority, on the topic of his name, so I'll believe him. If, on the other hand, I walk down the street and someone tells me that the Cubs won the World Series, I'd be entirely skeptical, given the poor history of the team. If a second person told me, though, and they were independent of the first, I would probably start believing that it was true. Here, on this topic of the Cubs winning the championship, the required quantity of social proof is 2 individuals who are strangers - 1 alone is insufficient. Of course, if a friend told me, and I interrogated him a bit ("You're not wagging my dog, are you?"), then I would probably believe it with just the evidence of one person's opinion - the threshold is lower because the quality of the belief source is higher. Make sense?

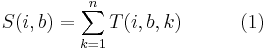

Let's assume a simple function for calculating social proof, for a naive individual i, a given belief b, and a set of believers K. The individual is "naive" because he lacks any knowledge of the opinion at hand. So, we can imagine a social proof function that looks like

Social proof value = Sum of trustworthiness over all known believers

In dense math-speak, this could be described as

"Believers" are those individuals whom the naive individual knows to believe. It does not include individuals who believe but aren't known to believe.

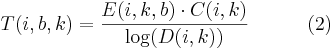

Trustworthiness can be interpreted as a function of perceived expertise, perceived character, and social distance, for an individual i with regard to a believer k and belief b.

Trustworthiness = Perceived expertise on the topic * perceived character in general / log(social distance)

Or, in math-speak

Why a log()? Because we tend to give people who are really close to us a lot of credibility, while this credibility plummets for people just outside this social circle. And if you're really outside the social circle, credibility afforded is not that much different than if you're just outside the circle (a friend of a friend of a friend is only marginally more trustworthy than a friend of a friend of a friend of a friend of a friend of a friend of a friend).
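Written out in symbols, the trustworthiness formula above might look like the following. The symbol names are assumptions chosen for illustration, not necessarily the original notation: T is trustworthiness, E is perceived expertise on the topic, C is perceived character in general, and D is social distance, all as judged by individual i about believer k (and, for expertise, belief b).

$$
T_i(k, b) = \frac{E_i(k, b)\, C_i(k)}{\log D_i(k)} \tag{2}
$$

One thing to keep in mind with this form: log(1) is 0, so the expression only behaves if social distance is measured on a scale where everyone sits at a distance greater than 1.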

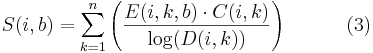

That gives us, in all its abstract glory,

An important simplification has been made here - we haven't deducted any social proof for the presence of active anti-believers, who are at direct odds with the believers and work against them. We could do this too via a subtraction or something else, but let's just simplify that away for now.
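As a sketch of what the combined expression looks like, here it is in symbols. The notation is assumed for illustration: S is the social proof value, T is trustworthiness, E is perceived expertise, C is perceived character, D is social distance, i is the naive individual, b is the belief, and K is the set of believers known to i.

$$
S_i(b) = \sum_{k \in K} T_i(k, b) \tag{1}
$$

Substituting the trustworthiness definition (expertise times character, divided by the log of social distance) for each term of the sum gives

$$
S_i(b) = \sum_{k \in K} \frac{E_i(k, b)\, C_i(k)}{\log D_i(k)} \tag{3}
$$

If anti-believers were included, one possible extension (again, only an assumption) would be to subtract a matching sum over the known anti-believers.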

So here's the deal - for each individual, one can imagine a set of belief/social proof threshold pairs. The social proof threshold value is the amount of social proof needed to instill a belief in a person's mind. In other words, if the social proof value (as given by equation (3)) is greater than the person's social proof threshold value, the belief is instilled and the person becomes a convert.
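To make the threshold test concrete, here is a minimal Python sketch of the social proof calculation and the comparison against a threshold. Everything named here is hypothetical: the `Believer` class, the function names, the use of the natural log, and the numbers, which are picked only to mirror the Cubs example (one stranger is not enough, two strangers are, one friend is).

```python
import math
from dataclasses import dataclass


@dataclass
class Believer:
    expertise: float        # perceived expertise on the topic
    character: float        # perceived character in general
    social_distance: float  # assumed > 1 so that log(distance) is positive


def trustworthiness(believer: Believer) -> float:
    """Expertise * character / log(social distance), using the natural log (an assumption)."""
    if believer.social_distance <= 1:
        raise ValueError("social_distance must be greater than 1")
    return believer.expertise * believer.character / math.log(believer.social_distance)


def social_proof(known_believers) -> float:
    """Sum of trustworthiness over all believers the individual knows about."""
    return sum(trustworthiness(b) for b in known_believers)


def is_converted(known_believers, threshold: float) -> bool:
    """The belief is instilled when social proof is greater than the threshold."""
    return social_proof(known_believers) > threshold


# Illustrative numbers only.
stranger = Believer(expertise=1.0, character=1.0, social_distance=10.0)
friend = Believer(expertise=1.0, character=1.0, social_distance=2.0)
cubs_threshold = 0.5

print(is_converted([stranger], cubs_threshold))            # False
print(is_converted([stranger, stranger], cubs_threshold))  # True
print(is_converted([friend], cubs_threshold))              # True
```

The friend clears the threshold alone because a smaller social distance makes the denominator smaller, which is the "quality of the source" effect described earlier.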

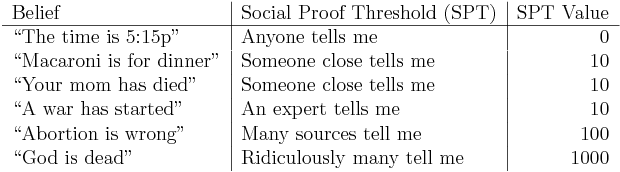

As an example, the table below could be the table of my belief/social proof thresholds when I was 5 years old (naive with little knowledge and few beliefs, but taught not to trust strangers):

- Row 1: Anyone can tell me what time it is, and I'll believe them. My threshold value is 0.

- Row 2: An expert in dinner (e.g. my mom, dad, restaurant owner, etc) must tell me macaroni is for dinner before I believe, or a lot of other people tell me before I believe.

- Row 3: Again, an expert, meaning someone close to the family or a coroner or doctor, must tell me that my mom has died before I believe them. If a random stranger told me I wouldn't believe them.

- Row 4: Again, if a stranger told me a war had started, I wouldn't believe them, but if the newspaper tells me, then I believe it because they're an expert on this type of news.

- Row 5: If just my mom told me that abortion was wrong, I wouldn't necessarily believe her, esp. if my mom and dad disagreed. But if it's a law, which means many "experts" (lawmakers) believe it to be true, then I would believe them.

- Row 6: Nietzsche alone is inadequate for me to believe that God is dead. My parents are inadequate. Even laws are inadequate. Most of the whole world would have to believe it before I believed it.

Make sense? Remember that the SPT value isn't just the number of people who believe, but the result of equation (3) above.

OK, so each individual, for each belief, has a social proof function and social proof threshold value inside his head. So depending on the belief, a different amount of social proof may be required.

So what does this have to do with efficient persuasion? As you may have noticed, there is a bit of self-reference in equation (3). As the social proof thresholds of an initial set of individuals are exceeded, this changes the social proof values for everyone else, inching them closer to their social proof thresholds. So, given a specific belief and knowledge of everyone's social proof functions and social proof threshold values, one can determine whether one's effort at persuasion will be successful.

Here's a tangible way to think about it. Say there are 10 people in total, counting yourself, and the only mechanism of persuasion available is social proof. Let's say that you actually believe what you're trying to persuade others to believe (you're the base case - you've got a belief *not* via social proof). If there's no one who will believe because you believe, you're doomed. If, however, the social proof value of your belief is above the threshold for 3 other people (say, your family), you now have 4 people believing (counting yourself). Now, if this new level of social proof is below the thresholds of everyone else, again, you're doomed. But if the new level of social proof is above the threshold of the remaining 6 people, then you now have all 10 people believing. Success!
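The walk-through above can be simulated in a few lines of Python. This is only a sketch under simplifying assumptions: everyone knows about every believer, each believer contributes a trust weight of 1.0 unless a different `trust` function is passed in, and the threshold numbers (0.5 for family, 3.5 or 4.5 for everyone else) are invented to reproduce the 1, then 3, then 6 pattern. The function name `run_cascade` is hypothetical.

```python
def run_cascade(thresholds, seeds, trust=lambda listener, believer: 1.0):
    """Spread a belief by social proof alone until no one new converts.

    thresholds: dict mapping person -> social proof threshold
    seeds: people who already believe (not via social proof)
    trust: function giving how much `listener` trusts `believer`
    Returns the final set of believers and the list of conversion waves.
    """
    believers = set(seeds)
    rounds = [set(seeds)]
    while True:
        newly_converted = {
            person
            for person, threshold in thresholds.items()
            if person not in believers
            and sum(trust(person, k) for k in believers) > threshold
        }
        if not newly_converted:
            return believers, rounds
        believers |= newly_converted
        rounds.append(newly_converted)


family = {f"family_{n}": 0.5 for n in range(3)}
others = {f"other_{n}": 3.5 for n in range(6)}

believers, rounds = run_cascade({**family, **others}, seeds={"you"})
print([len(r) for r in rounds])  # [1, 3, 6]
print(len(believers))            # 10

# The "doomed" case: four believers are not enough for anyone else.
skeptics = {f"other_{n}": 4.5 for n in range(6)}
stalled, _ = run_cascade({**family, **skeptics}, seeds={"you"})
print(len(stalled))              # 4
```

Passing a different `trust` function is where perceived expertise, character, and social distance would come in, so that the same believer counts for more with some listeners than with others.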

Graphically, one can think of this as a histogram, with the x-axis showing the social proof threshold value for a specific topic, and the y-axis showing the number of individuals who have that social proof threshold value. If equation (3) didn't vary on an individual basis, you could tell whether you would be successful simply if the integral of the bar graph from 0 to the previous threshold value is >= the threshold value of the next bucket in the histogram (see the first histogram below). Another way to think about it is that the average value for each bucket must never drop below 0, as you make your way down the x-axis. If it does, you've hit a wall and will not be able to persuade any more people than you've persuaded so far (see the second histogram below).
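For the special case where the social proof value doesn't vary by individual, the histogram check can be sketched as a single pass over the buckets in order of increasing threshold. The assumptions here are mine: every believer adds the same fixed `weight`, you start with `seeds` believers, and a bucket converts only when social proof is strictly greater than its threshold (matching the definition of the threshold given earlier; use `>=` if you prefer the looser comparison). The function name `reach` is hypothetical.

```python
from collections import Counter


def reach(thresholds, seeds=1, weight=1.0):
    """Return how many people end up believing, seeds included.

    thresholds: iterable with one social proof threshold per person
    seeds: number of initial believers
    weight: social proof contributed by each believer (same for everyone)
    """
    histogram = Counter(thresholds)  # threshold value -> number of people
    believers = seeds
    for threshold in sorted(histogram):
        if believers * weight > threshold:
            believers += histogram[threshold]  # the whole bucket converts
        else:
            break  # hit a wall: every later bucket has a higher threshold
    return believers


print(reach([0.5] * 3 + [3.5] * 6))  # 10
print(reach([0.5] * 3 + [4.5] * 6))  # 4
```

Stopping at the first bucket that fails is safe because the number of believers can no longer grow at that point, and all remaining buckets demand even more social proof.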

Make sense? This is extremely simplistic, though likely hard to follow as described above. I do think this framework can give us a useful way of thinking about directing our persuasive efforts. Of course, there are many unknowns. We don't necessarily know the individual's social proof value function (equation (3)) for the belief we care about, and they may not even know themselves. We don't necessarily know how much they think a believer is an expert on the topic, what they think of her character in general, and even how (socially) close they are to her. We don't necessarily know an individual's social proof threshold value for a given belief. And of course, social proof is only 1 of 3 mechanisms of acquiring belief. That said, I think this may be a novel way of analytically thinking about persuasion, and may help spur new thoughts that take us closer to understanding persuasion in an analytical way.

--

Books I've read about persuasion and social change:

• The Power of Persuasion by Robert Levine (thanks Dave)

• The Wisdom of Crowds by James Surowiecki

• How to Win Friends and Influence People by Dale Carnegie

• The Tipping Point by Malcolm Gladwell

Comments (3)

nikhil

- May 22, 2007, 11:23a

Adam B brought up a good point - the theory above does not include the concept of viralness, and a belief's viralness may in fact prove to be more relevant to conversion than a potential convert's SPT value. If no one talks about a specific belief, its rate of adoption will be very slow, or it could even die out.

Adam also pointed me to Duncan Watts for more reading: http://www.sociology.columbia.edu/fac-bios/watts/faculty.html

Rajesh Shakya

- Jul 30, 2007, 1:21a

Hi

Very good article on persuasion and Social proof.

Also my articles on persuasion marketing and Social proof may be of interest to you all:

http://www.rajeshshakya.com/principles-of-persuasion-in-marketing.htm

http://www.rajeshshakya.com/social-proof-weapon-of-marketing-influence.htm

cheers,

Rajesh Shakya

http://www.rajeshshakya.com

helping technopreneurs to excel and lead their life!

nikhil

- Aug 2, 2018, 8:38p

On this topic, there was a recent article in Science entitled "Experimental evidence for tipping points in social convention". Overall I didn't much like the article, as there was a lack of detail describing their exact experimental setup in the main text (though it is likely explained better in the supplemental materials, I suspect). The work also raises the question of how there is ever any stable majority, if a committed minority of only 25% can upset tradition...

http://science.sciencemag.org/content/360/6393/1116
